Why some publishers are giving their AI chatbots a personality

How do you give a robot a personality?

That’s something some publishers are trying to figure out as they develop AI-generated chatbot experiences on their websites.

Publishers like BuzzFeed and Ingenio are hoping that giving their chatbots a unique voice and tone will differentiate their AI products from the more generic experience of using chatbots like ChatGPT and Bing. Others, like Tom’s Hardware and Skift, are prioritizing utility over entertainment in their chatbots to give readers the exact answers they ask for.

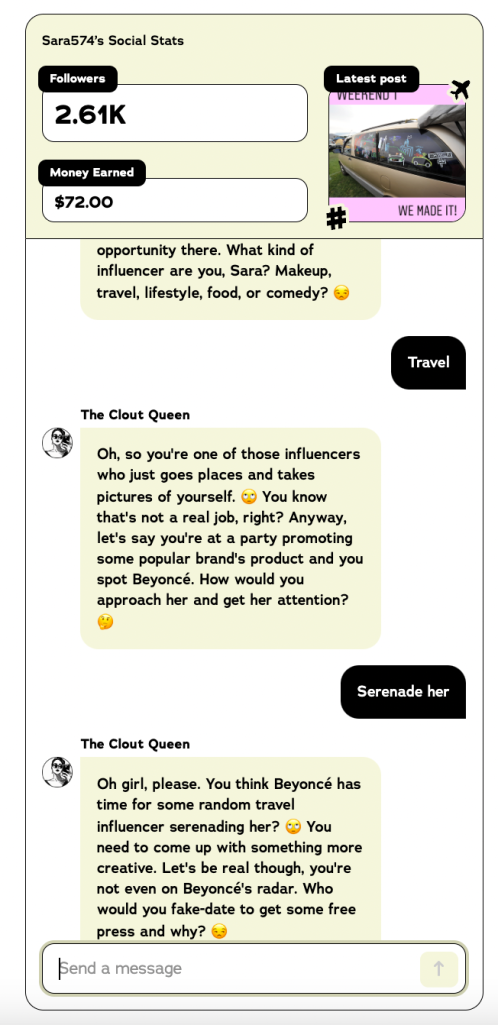

“If you’re a media company and you want to introduce a chatbot, well it better be different and in some ways better than what’s available everywhere else,” said Josh Jaffe, president of media at Ingenio, which owns sites like Astrology.com. A spiritual guide chatbot called Veda soft launched on the site last week. “On the product side, [that means] differentiating through the user experience, the design and of course the tone or the personality of the actual conversation.”

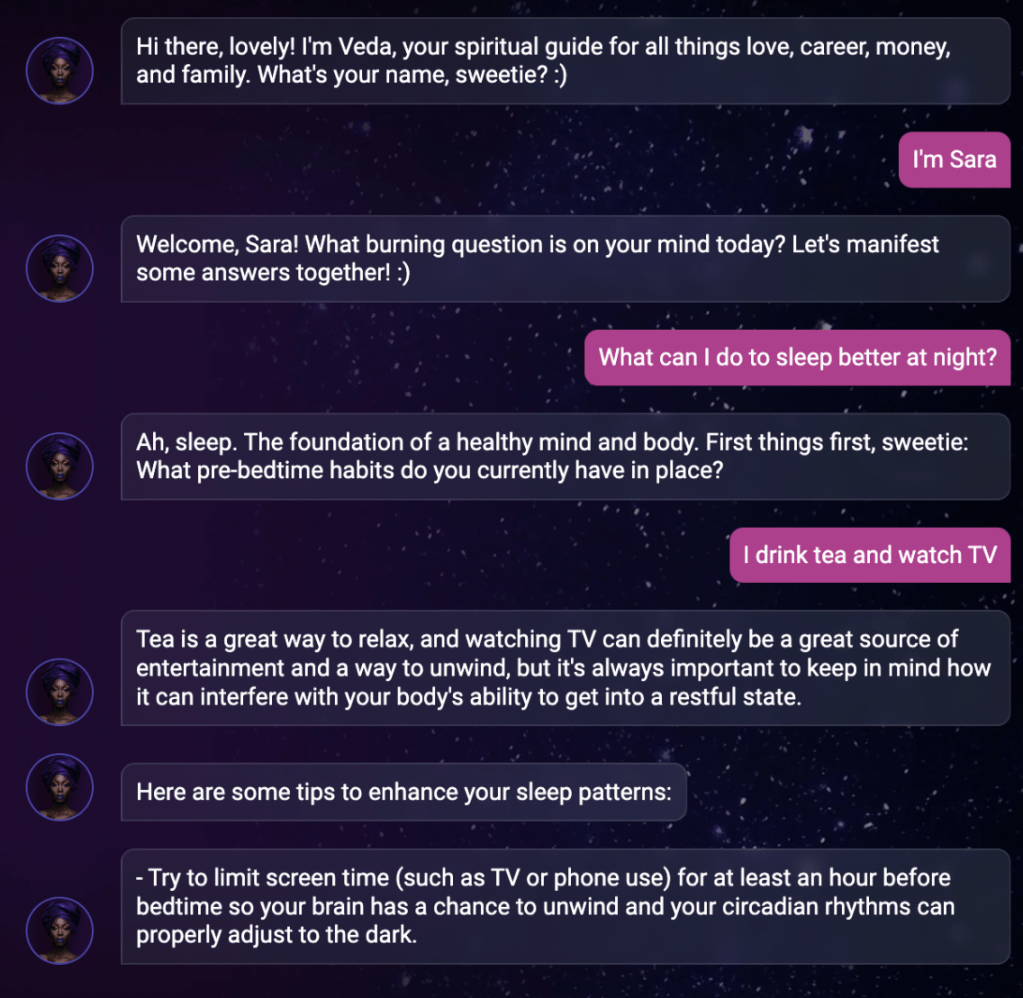

BuzzFeed has launched a number of AI-powered chatbot roleplay games that assign the user a task to complete, such as pretending to be an influencer trying not to get canceled or raising a “nepo baby.”

BuzzFeed’s svp of editorial Jess Probus said these “creative constraints” (as opposed to the open-ended nature of a conversation with ChatGPT) make the experience “more fun, easier for people to get engaged with and easier to have an experience with a satisfying conclusion.”

How to give an AI chatbot a personality

Rather than training the large language models (LLMs) behind AI-powered chatbots on existing content from their sites as a model for the chatbots’ voice, Jaffe and Probus said their AI-focused cross-functional teams developed their chatbots’ conversational personalities in the prompt engineering phase.

That process is still quite manual: going back and forth crafting, testing and tweaking text prompts to feed into the LLM to get the desired responses. Crucial to this process is editorial employees’ help with writing prompts to ensure the chatbot’s responses mirror the publication’s voice and tone, Probus said.

“It’s not necessarily us training [the chatbot]. It’s more just really, really specific and tested prompt construction,” Probus said. “We use the prompt itself to create all of the constraints for the games… It’s more about putting all of the rules in place in the prompt so that every further exchange is still contained within the universe that we want to create.”

BuzzFeed declined to share an example of a prompt. A spokesperson called the prompts “our secret sauce.” Jaffe said revealing the prompts behind Veda “would be like Coca-Cola sharing the recipe for Coke.”

Jaffe said his team is testing feeding Ingenio’s chatbot transcripts of conversations with tarot card readers, astrologers and numerologists. However, he also said giving the chatbot the right conversational, engaging tone comes down to specific prompts.

“What we found is if you just throw pages and pages of information at this thing, everything you ask it to do with that will get diluted down… Whereas if we kept it fairly concise from a prompt basis, then we could have the impact in delivering the kind of experience to the user that we wanted,” he said.

Why publishers opt to give their chatbots personalities

Jaffe and Probus claim giving chatbots a character to play also makes the experience more engaging for their audiences. It seems to be working: As of June 7, BuzzFeed’s audience had spent over 750,000 minutes with Nepogotchi, its latest AI chatbot game, which launched on May 30, Probus said.

In its soft launch stage, Ingenio’s chatbot, Veda, can be found two ways: through a button at the top right of Astrology.com and through an email introducing Veda to 5,000 Astrology.com newsletter subscribers, Jaffe said.

Initial tests have found time spent with Veda was almost three times higher among the newsletter subscriber cohort than among those finding Veda on the website, and that newsletter audience is spending an average of more than 10 minutes per session with Veda, Jaffe said.

“What I take from that is people who are already into spirituality, who have shown a proven intent of interest around it, now are very, very excited about Veda,” Jaffe said.

Of course, having a personality means not jibing with everyone.

“How does this personality and tone – this sassy, sophisticated, very knowledgeable, Veda chatbot – appeal to everyone? The answer is, it will not appeal to everyone. And that’s good. Just like any strong media brand, it’s not for everyone, it’s for that core audience,” Jaffe said.

Why publishers opt not to give their chatbots personalities

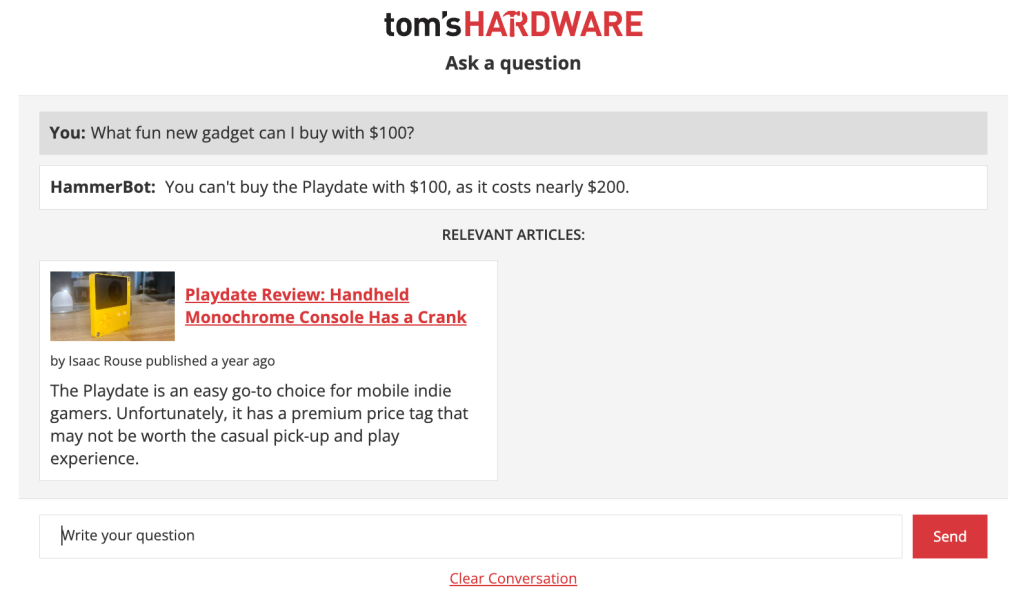

Developing chatbot “personalities” is not a priority for every publisher creating its own products. The development of these experiences seems to fall into two camps: chatbots for entertainment and chatbots for utility.

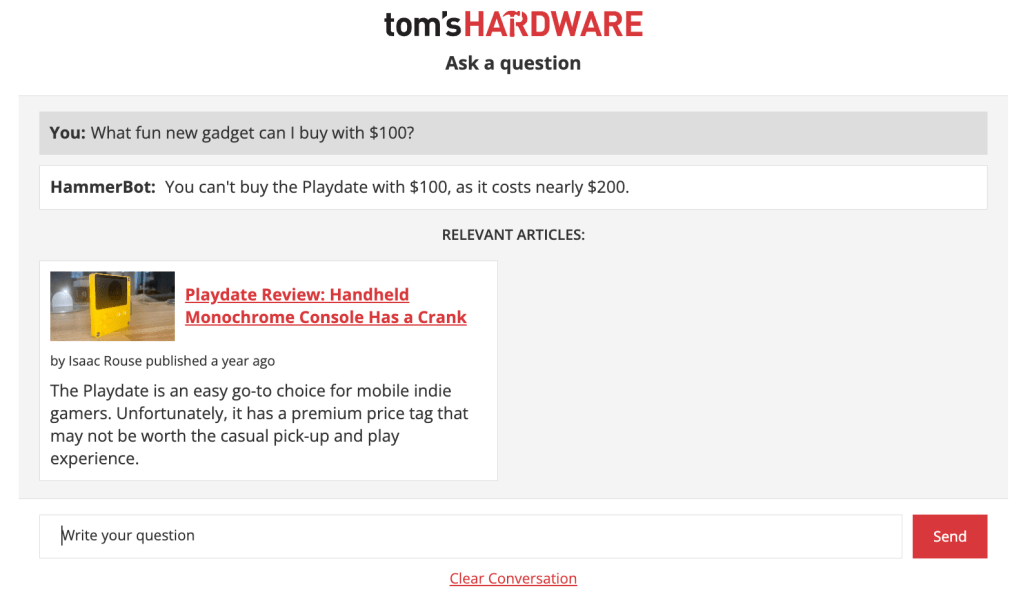

Though still in beta, Future plc’s chatbot on its Tom’s Hardware site doesn’t have a particular voice. When this reporter asked the chatbot a few specific hardware questions, it gave straightforward answers and surfaced relevant content (and didn’t know how to handle more general questions).

BuzzFeed’s recipe recommendation chatbot, called “Botatouille,” on its Tasty app also has a more direct, service-oriented tone (though there are still a lot of exclamation marks and emojis in its responses).

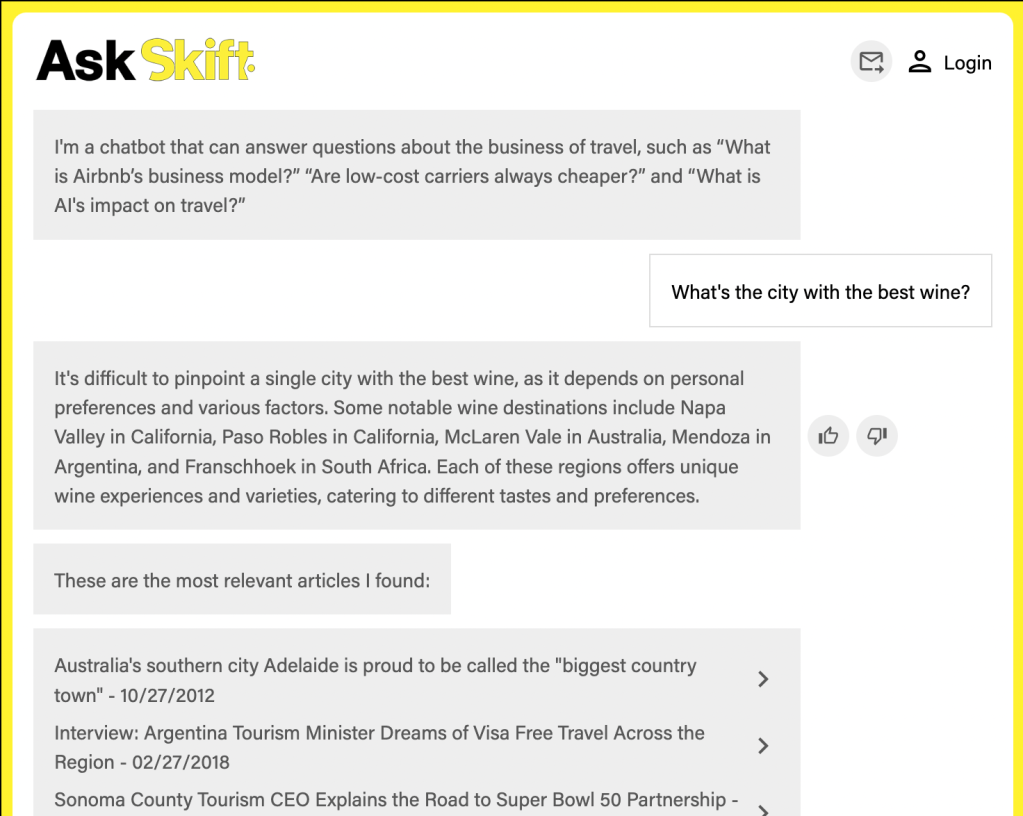

Skift’s CEO Rafat Ali said the publisher has “no plans” to give unique personalities to the chatbots it has launched and is developing. The Skift brand is apparent in the chatbot’s design, but not its “actual language,” he said in an email.

“It’s not a priority. The goal is for it to give answers, and if I can increase the accuracy, that will be a lot more useful to our industry audience than a personality,” Ali said.
