The rise of deepfakes poses a new trust challenge for publishers

As AI deepfakes spread faster and become more difficult to detect, publishers are caught in the crossfire, with fact-checking teams working harder to verify what’s real and protect their organizations’ credibility in an internet flooded with AI-generated content.

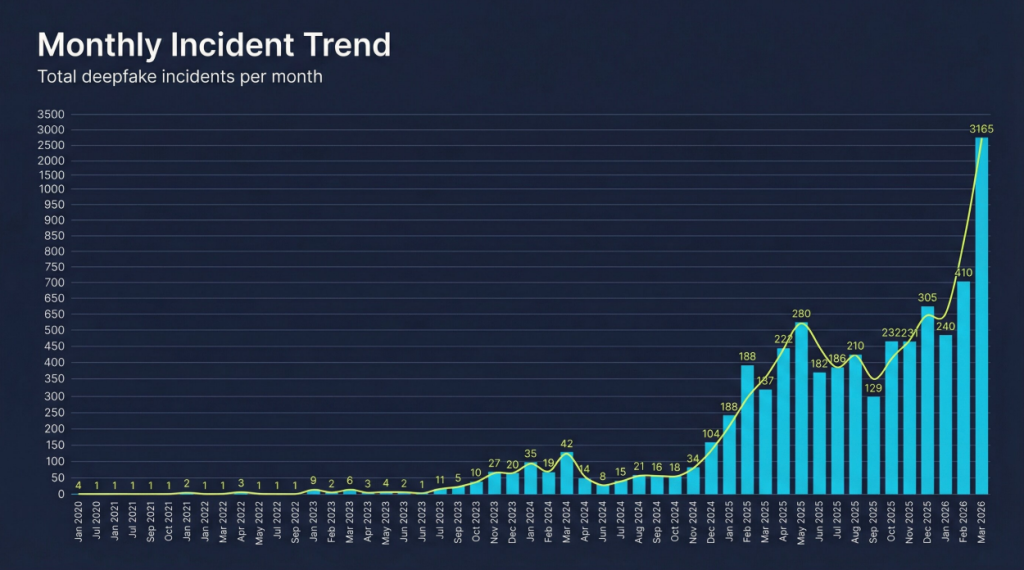

Fresh data shows deepfakes are spiking. A new report from IdentifAI, a startup focused on detecting deepfakes and other AI-generated or AI-altered content, identified 3,165 deepfake incidents in March 2026 alone, up from just four in January 2020.

The report, which was based on 10,000 deepfake incidents, spotlighted a clear escalation: synthetic media and manipulation have entered a more dangerous phase globally, with deepfakes fueling political instability, enabling large-scale financial exploitation, and shaping public perception. The incidents were often amplified by social media algorithms, according to the report.

Deepfakes and concerns around AI-generated content fueling the spread of misinformation aren’t new. President Trump’s first U.S. presidential election cycle sparked a battle against disinformation and fake news, especially at a time when trust in legacy news media was eroding.

But the latest boom has been compounded by generative AI tools that make it cheaper, easier and faster to create deepfakes, and the scale has made it harder for news organizations to keep up and determine what is real and what is manipulated — especially during breaking news moments.

It’s the next phase in the misinformation wars that spread like wildfire in 2016 and beyond. Reuters recently reported on AI deepfakes making their way into political campaigns for the U.S. midterm elections this year.

AP News verification editor Barbara Whitaker said she’s seen an increase in “AI-generated false and misleading visual information,” particularly during the recent war in Iran. While most of this increase is coming from “AI slop,” or low-quality, high-volume content that is easier to recognize, her team also works to verify the authenticity of content found to be deepfakes. But that hasn’t changed the work AP Fact Check does — it’s just made it more difficult to do.

“For the more sophisticated AI-generated photos and videos, including deepfakes, we address it as we always have. We use standard verification tools, reverse image search and we consult relevant experts,” Whitaker said. “One of the biggest challenges at the moment is the improving quality of this content. Some of the old tells, like too many fingers or missing physical elements, don’t exist anymore, making it more difficult to assess what is authentic.”

Media consultant Tom Bowman, an advisor for IdentifAI, said the risk is especially high for publishers with subscription businesses. People paying for news expect factual, verified information in an environment where misinformation and disinformation are being amplified on social media platforms, so the stakes for getting it right are higher.

“The genuine risk to news organizations is if they just get lumped in as being just as bad as social media,” said Bowman, who is also a former vp of strategy, operations and global ad sales for the BBC. “The [IdentifAI] report is saying that journalism is massively under pressure. It’s not that journalism is failing, it’s that journalists alone — humans alone — can’t absorb the full verification burden. They need tools, [and the tools have to act] in near real time.”

Publishers like AP News and the BBC have large teams dedicated to verifying the authenticity of content — the BBC Verify team, for example, has roughly 60 reporters — but not every publisher has the resources to build that kind of operation.

Bowman said one of the biggest, growing threats to publishers is what he called “fake PR and contributors,” or the phenomenon of people using AI to generate expert-sounding commentary on a range of topics, fooling journalists at major news outlets. The trend was spotlighted in a Press Gazette article, which found examples of these dubious specialists quoted in publications like Business Insider, The Guardian and Vogue.

According to IdentifAI’s report, AI-generated video made up the largest share of the deepfake incidents the company tracked (45.6%), followed by mixed formats at 25.2%, still images at 17.4%, voice cloning at 10.5% and text generation at 1.3%.

Most of these incidents occurred in the U.S. (46.9%), followed by the U.K. (8.2%), India (7.2%) and Israel (6.6%).

In March, NewsGuard identified 3,006 AI “content farm” news and information websites: ad-supported sites that churn out dozens of articles a day laced with misinformation.

The Reuters Institute forecasted that this year will bring an increased demand for verification in newsrooms, and that credibility will differentiate news outlets. Audiences will seek evidence and sourcing to support what they are seeing online, and news publishers have an opportunity to meet that demand, according to the report.

Beyond the implications for political elections, deepfakes are often created for “impersonation for profit,” in which someone’s likeness (a politician, media personality or celebrity) is used to endorse a product or platform, and the result is shared on social media to sell something, according to an analysis from the AI Incident Database.

YouTube made its deepfake detection tool widely available last week, so that anyone — but particularly actors, athletes, creators, musicians and politicians — can identify and request the removal of deepfakes on its platform.

Deepfakes target journalists too: between December 2023 and December 2025, Reporters Without Borders (RSF) documented and studied the cases of 100 journalists targeted in 27 countries. Women accounted for 74% of the cases studied.

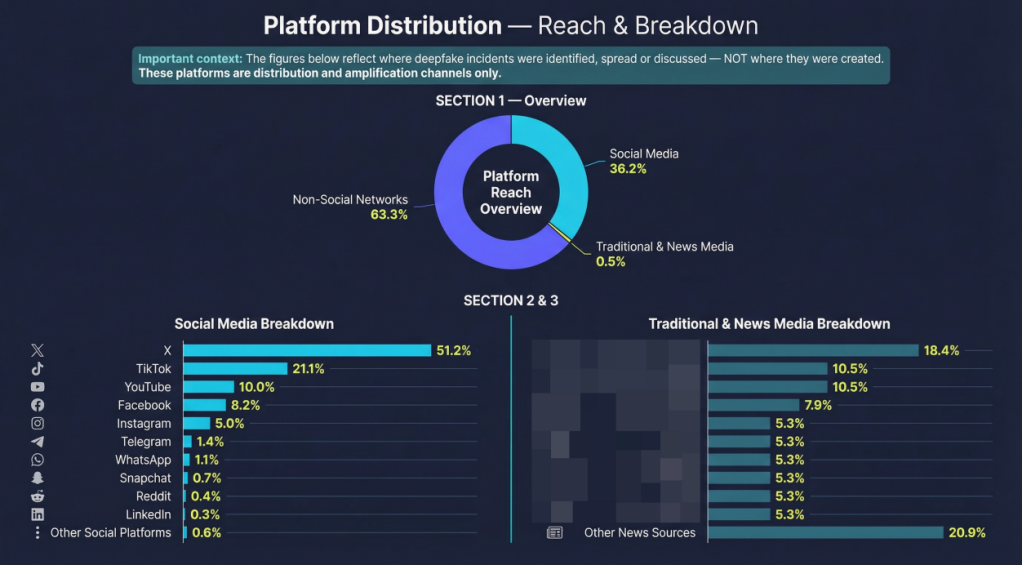

According to IdentifAI’s report, more than a third of deepfakes are distributed on social media, with X accounting for 51.2% of the social media platforms where deepfake incidents were identified, spread or discussed. Less than 1% is distributed by traditional and news media, according to the report, which anonymized media sources. Meanwhile, social media and tech companies have moved away from fact-checking content shared on their platforms; Meta discontinued its fact-checking program last year.

“Professional media has a real reputational risk, commercial and employment risk,” Bowman said. “Only a small percentage of [the content analyzed is] not real, but the cost [to media companies] of what isn’t real is very high.”
