Some of the world’s largest advertisers are pushing for an overhaul in how the ad industry as a whole tackles the evolving problem of brand safety.

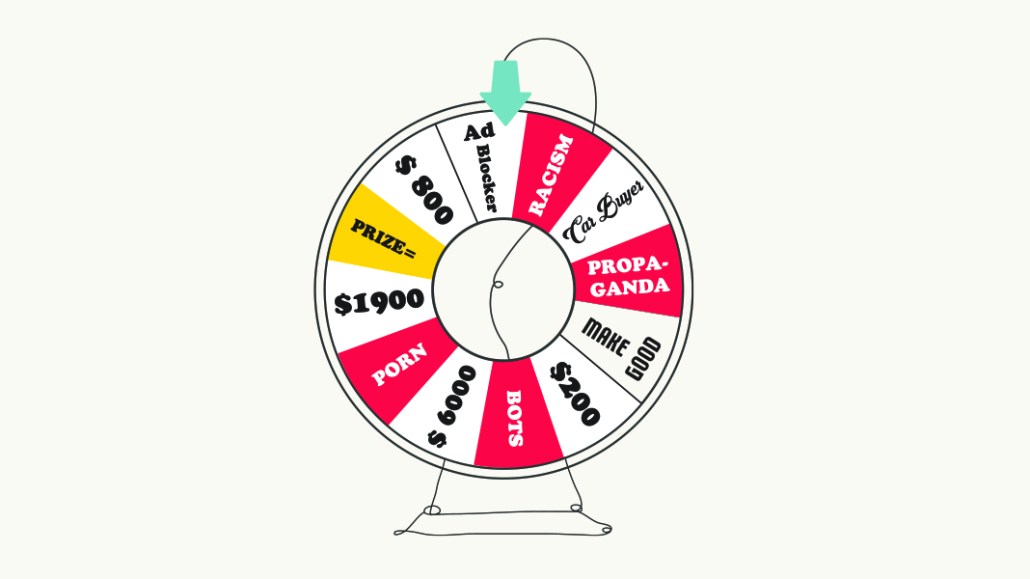

By now, advertisers are well aware of the shortcomings of the current approach to brand safety. Block lists are blunt instruments that often sacrifice nuance for safety.

But for the brand safety issue to be successfully wrangled, global advertisers must insist that platform companies exercise more sophistication in tackling how ads are matched to the appropriate environments. In a bid to deliver brand safety, platform companies have focused on removing harmful content rather than helping advertisers target the content that’s suitable for their specific advertising purposes.

The likes of Google and Facebook must do more to help advertisers develop their own approach to targeting content that is suitable for their ads to appear alongside, said Luis Di Como, Unilever’s evp of global media. Di Como, along with other senior marketers in the World Federation of Advertisers’ Global Alliance for Responsible Media, plans to meet in April with executives of Google, Facebook and Twitter to discuss the shift from brand safety to suitability.

Di Como and the rest of his group will try to convince executives from the platform companies to classify content according to more granular labels.

During the April meeting, the advertisers will present 11 definitions the platforms could use to categorize harmful content consistently. The definitions cover topics such as explicit content, drugs, spam, and terrorism. Their discussions will also focus on the content targeting tools that advertisers will need to gauge whether the content on these platforms is suitable for their ads, Di Como said.

“Now, we want the platforms to classify all of the granular inventory on their systems,” Di Como said. “This will [not only] help remove all the harmful content on those platforms but also help advertisers think more clearly about the concept of brand suitability.”

Since advertisers discovered in 2017 their ads had effectively funded terrorist videos on YouTube, both Google and Facebook have cracked down on what they have deemed to be “harmful content” that was uploaded to their platforms. From July to September 2019, an estimated 620 million pieces of harmful content were removed by YouTube, Facebook and Instagram combined, according to numbers seen by the World Federation of Advertisers. But the crackdown on harmful content has not stopped the problem. About 9.2 million pieces of harmful content were still seen by people on these platforms over the three-month period.

“We’re quantifying all of this [harmful content] in order to have a common consensus on the content we don’t want to fund and then remove it from the platforms,” said Di Como. “There are many other content definitions out there that some advertisers will believe is right for their brands in some markets, while others won’t.”

Suitability and misinformation are much tougher to police than extremist or graphic content, said Neil Peace, client partner at media agency Universal McCann. “The all-powerful online platforms that host this content and monetize it so successfully must take the lion’s share of responsibility and hold themselves as publishers to similar standards as the mainstream media,” Peace said.
