With conspiracy-peddling sites under the microscope, Taboola yanks its content ads off Infowars

Another online conspiracy, this time surrounding the Parkland, Florida, school shooting, has provided an ugly reminder of tech’s role in helping spread false or misleading information.

Infowars has landed in the spotlight for a video from founder Alex Jones alleging the shooting was a “deep state false flag operation.” YouTube pulled the video and warned Infowars that it faces a ban if it commits further violations. The move highlights the pressure that distribution platforms like YouTube and Facebook face when it comes to controversial content. The same pressure is on the monetization platforms used by sites like Infowars.

Infowars was running a native ad widget from Taboola. Taboola said it would remove the widget after being alerted to it by Digiday on Feb. 21. The widget appeared on Infowars through a partnership with a third party that was unaware of Taboola’s policy of not working with publishers that intentionally deceive or harm consumers, said Adam Singolda, CEO of Taboola.

“This is an oversight of our policy not extending properly to some distribution partners, for which we take responsibility,” Singolda said. “Following this discovery, we have since asked to remove ourselves from Infowars through that partner and will work with all of our third-party partners [so] that all of our policies are implemented throughout.” Singolda added that Taboola has 30 full-timers working on preventing the spread of misinformation and fake news.

Videos and posts promoting a theory that David Hogg, a student who’s been outspoken about the school shooting, was a “crisis actor” appeared on YouTube and Facebook before those companies removed them, showing that even the tech giants’ efforts to clean up their sites aren’t error-proof.

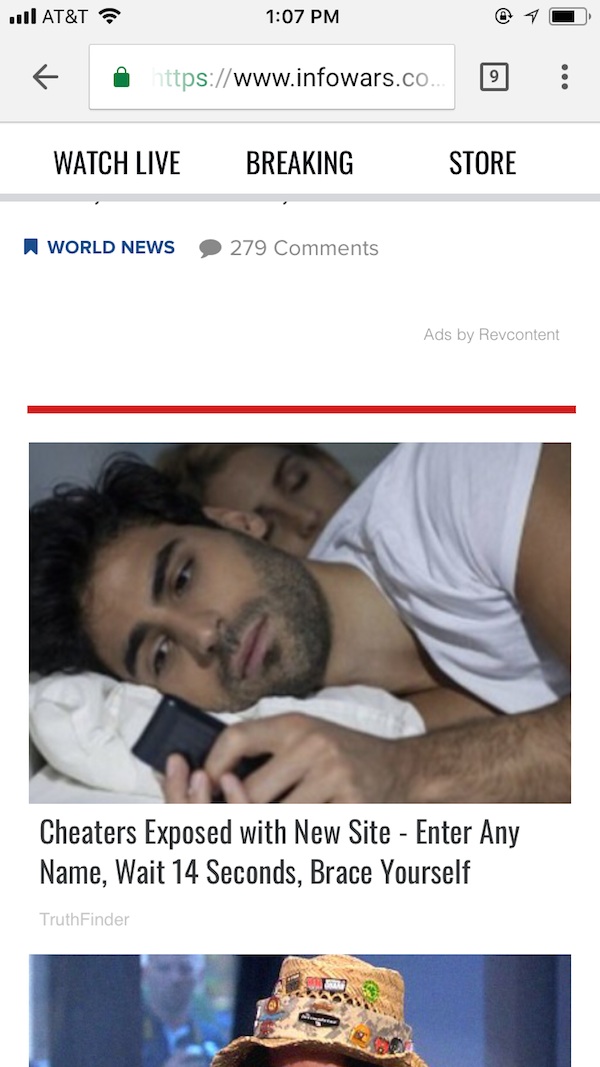

The conspiracy also shows that sites promoting such misinformation still have plenty of other ad tech tools for finding an audience and monetizing it. Revcontent, a content-recommendation network based in Sarasota, Florida, also has its widget displayed at the bottom of articles on Infowars, as shown in the accompanying mobile screenshot.

A spokesman for Revcontent said the company does provide ads to Infowars but “still have yet to be given any links that violate our extremely stringent terms with regards to editorial process.”

“YouTube, Facebook, Google, Twitter are all working on this and doing a good job, but the burden should not just be on the big guys,” said Marc Goldberg, CEO of Trust Metrics. “All these other third parties who are able to monetize these pages, advertisers have to stop funding them. You can plug into different exchanges, and if no one identifies you as a hate site, you can make money today. If we expect change, it can’t just be from the top.”

In a newly released Integral Ad Science survey of agencies, publishers and advertisers, 57.6 percent listed ads delivered within risky content as their second-biggest concern with programmatic, after ad fraud.

There’s been some progress in the digital ad ecosystem. Ad fraud is declining as advertisers get better at weeding it out, according to the Association of National Advertisers. Advertisers are pressuring the platforms to clean up their act because they realize that declining public trust in social media can rub off on the advertisers that use those platforms. Many of the fake-news sites that proliferated during the 2016 election are no longer around.

Still, there’s a growing acceptance that a certain amount of controversial content will always find an audience and funding. Marcus Pratt, vp of insights and technology at Mediasmith, said the agency is increasingly using whitelists in automated ad buying, which helps eliminate unsafe sites. But advertisers are more concerned with violence and nudity than with politically controversial content, and tech companies that don’t have a public image have less motivation to make sure they’re not associated with such content.

Barry Lowenthal, president of The Media Kitchen, floated the idea that advertisers could put a disclaimer on their ads saying they don’t endorse the surrounding content, which could pressure the platform in question to police that content. At some point, though, the question becomes how “safe” the internet can really become, given that freedom of expression is a value, too.

“You’re never going to remove everything bad in the world. Should we prevent everyone with a divisive opinion to express it?” he said.
