In graphic detail: The long road to accountability for social media platforms

Last week’s trials marked a turning point for social media. For the first time, big tech giants were held accountable for causing harm to children – and there were consequences.

First, there was the New Mexico case against Meta, in which a jury found the platform liable for violating the state’s Unfair Practices Act, and the company was fined $375 million. Then there was the California case – based on social media addiction – in which a jury found both Meta and YouTube liable for negligence. Meta was found 70% responsible for the harm caused and ordered to pay $4.2 million in damages, while Alphabet (on behalf of YouTube) had to fork out $1.8 million.

Together, these rulings signal a fundamental shift: platforms will be judged not just on the content they host, but on how the platforms themselves are built and designed. That could affect their growth and future revenue, and invite more regulation.

Digiday charted the long road that led to the verdicts.

Underage kids are using the platforms

Australia’s move to introduce a blanket under-16s social media ban reflects the scale of underage usage – and the growing concern over how platform design, from infinite scroll to recommendation algorithms, keeps younger users engaged.

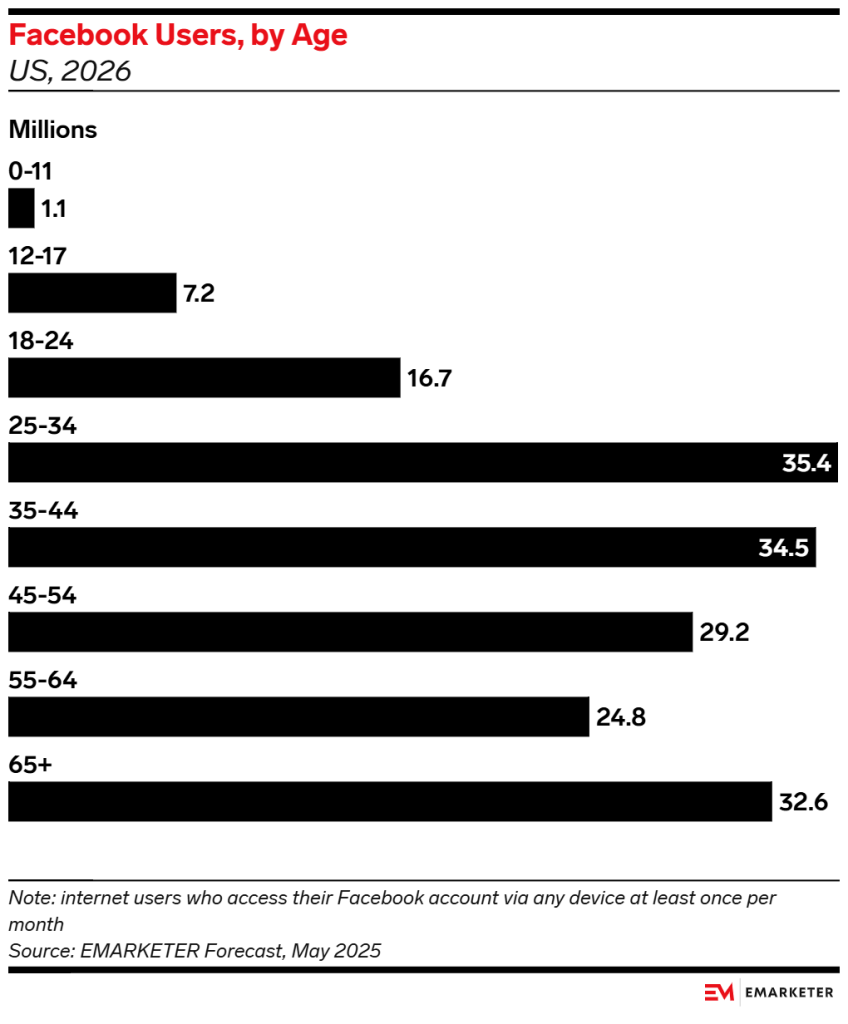

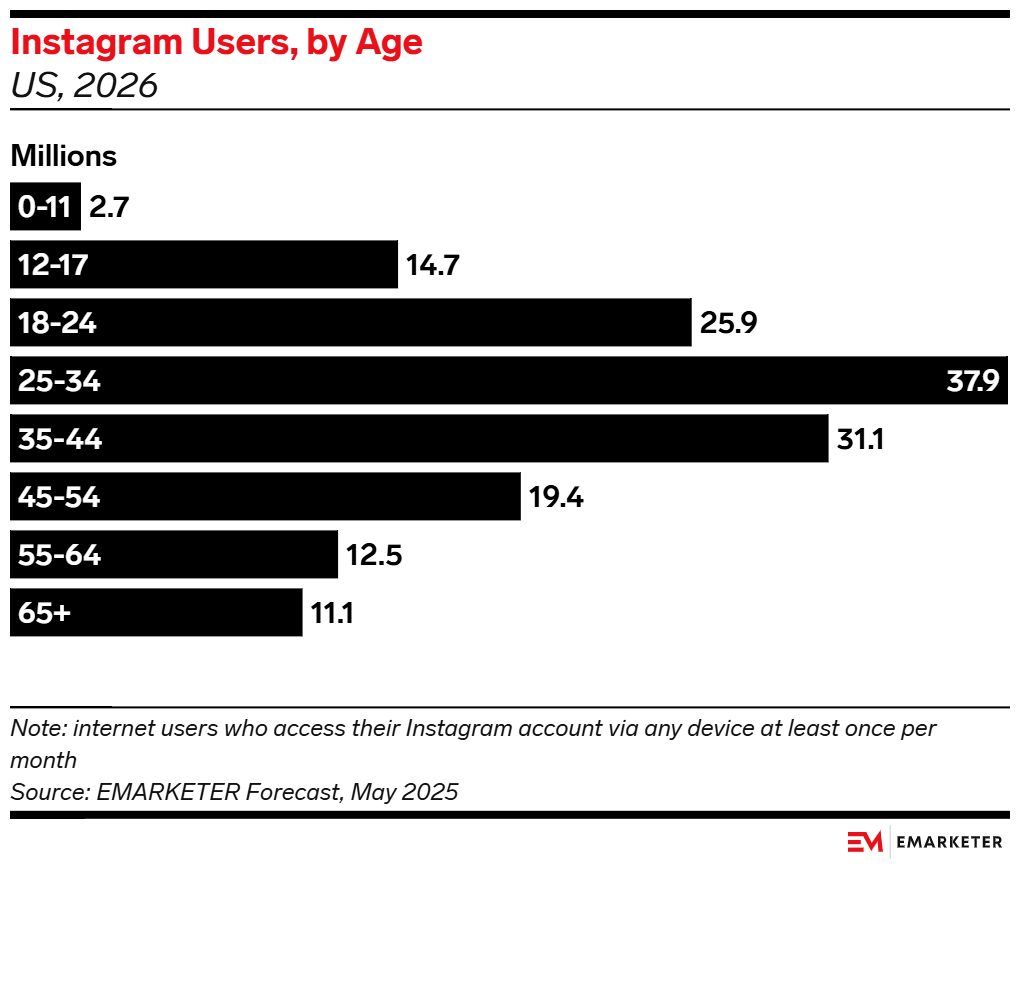

It’s an open secret that Meta products are used and viewed by kids under the age of 13 – the minimum age required to sign up to the apps. To put it plainly, eMarketer has reported that while the bulk of Meta’s users in the U.S. are aged 18 and above, there are still 8.3 million users aged 17 and under on Facebook and 17.4 million on Instagram.

Broken down: on Facebook, 1.1 million kids are aged up to 11 years old, and 7.2 million are aged between 12 and 17. On Instagram, 2.7 million children are aged up to 11 years old, while 14.7 million are aged between 12 and 17.

Social media tops time spent across all media types

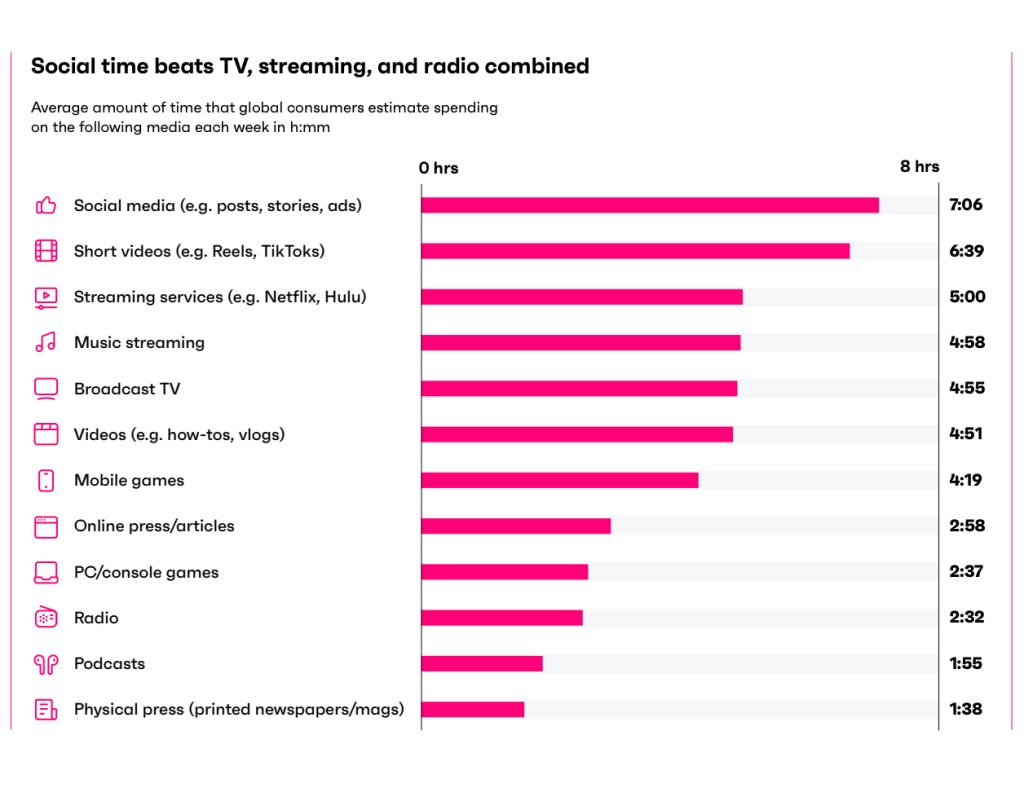

Since social media already dominates how people spend much of their time, regulators are increasingly focused on how those behaviors take shape from an early age, and on whether the platforms are shaping, rather than serving, those user habits.

According to GWI’s Connecting the Dots 2026 report, on average, global consumers spend about 7 hours and 6 minutes per week on social media overall, and around 6 hours 39 minutes on short-form videos (Reels and TikTok) per week.

Gen Z has reduced their social media use as a result

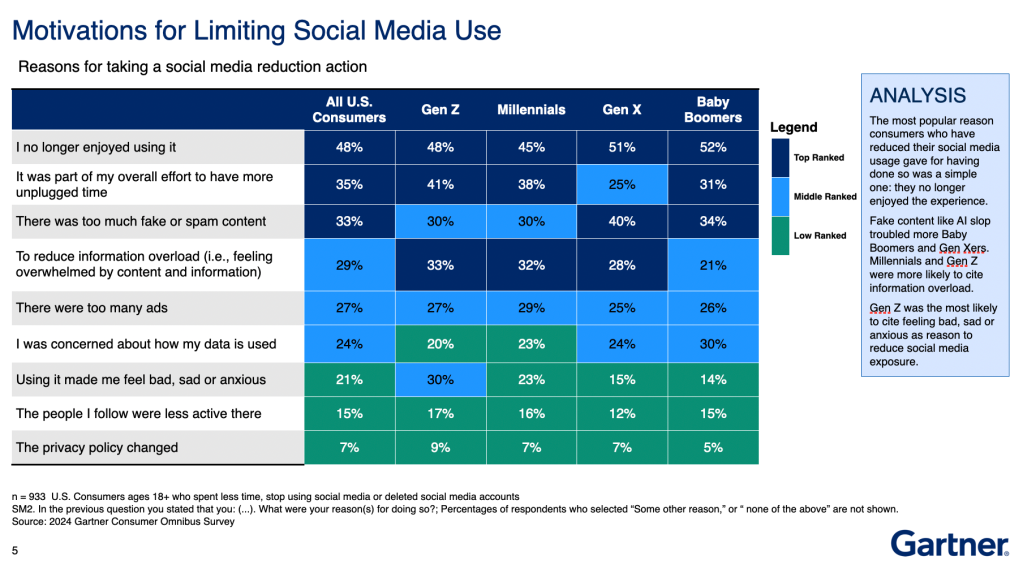

With Gen Z generally being “always on” social media, as well as the primary target audience for brands, it’s no wonder that over time they’ve become overwhelmed. And the stats prove it.

While consumers across all ages have taken various steps to reduce their social media usage, according to Gartner, 53% of Gen Z has decreased their time on the platforms.

When looking specifically at the reasons behind their decision, Gartner found that across all ages, the biggest cited reason was no longer enjoying using it. In particular, Gen Z also cited information overload, and the fact that using the platforms made them feel bad, sad or anxious.

Consumers are feeling more negative about the platforms than they ever have

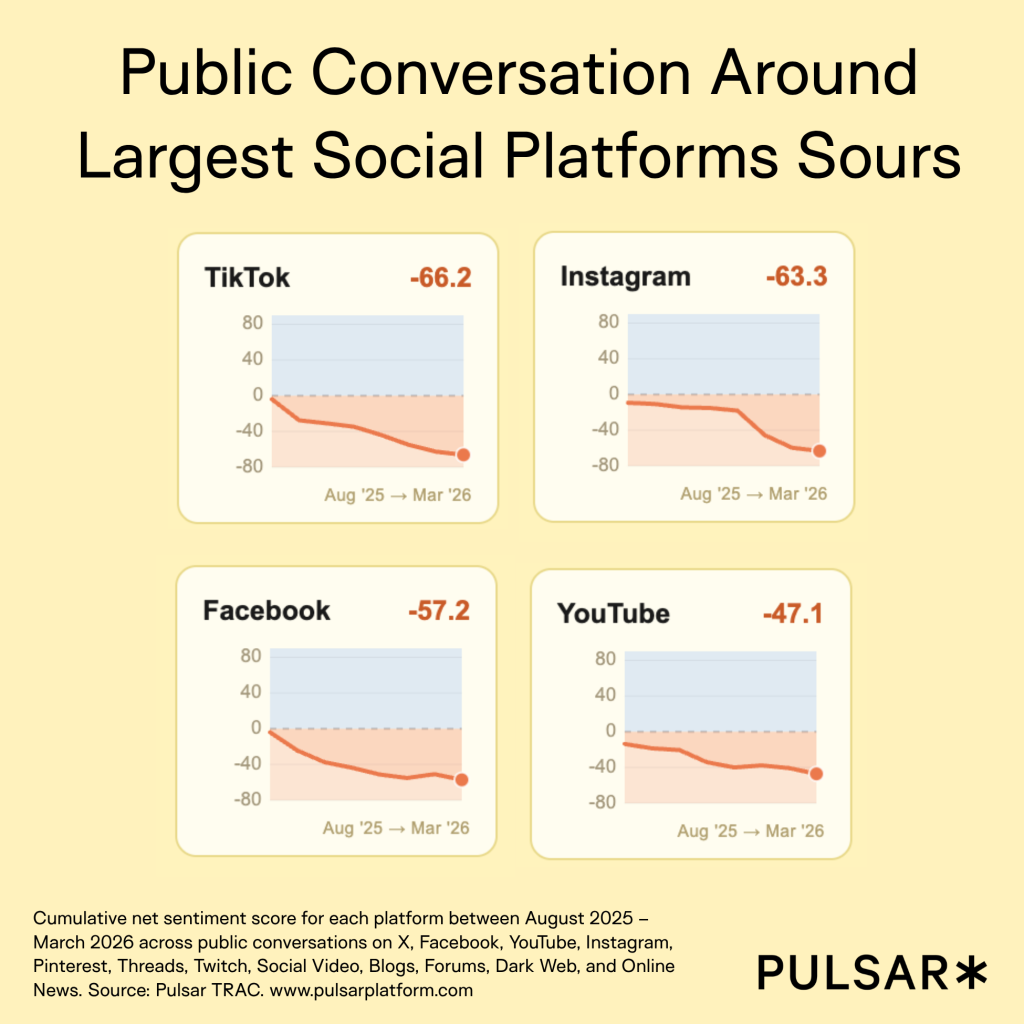

According to Pulsar, which tracked sentiment toward the major platforms between July 2025 and March 2026, public conversation has soured.

“There are many different opinions and narratives driving this from conversations about monetization practices, to ‘AI slop’, to the mental health impact on younger users. But of course the legislative and judicial conversations happening around the world are having an impact as well,” said Dahye Lee, marketing research lead at Pulsar.

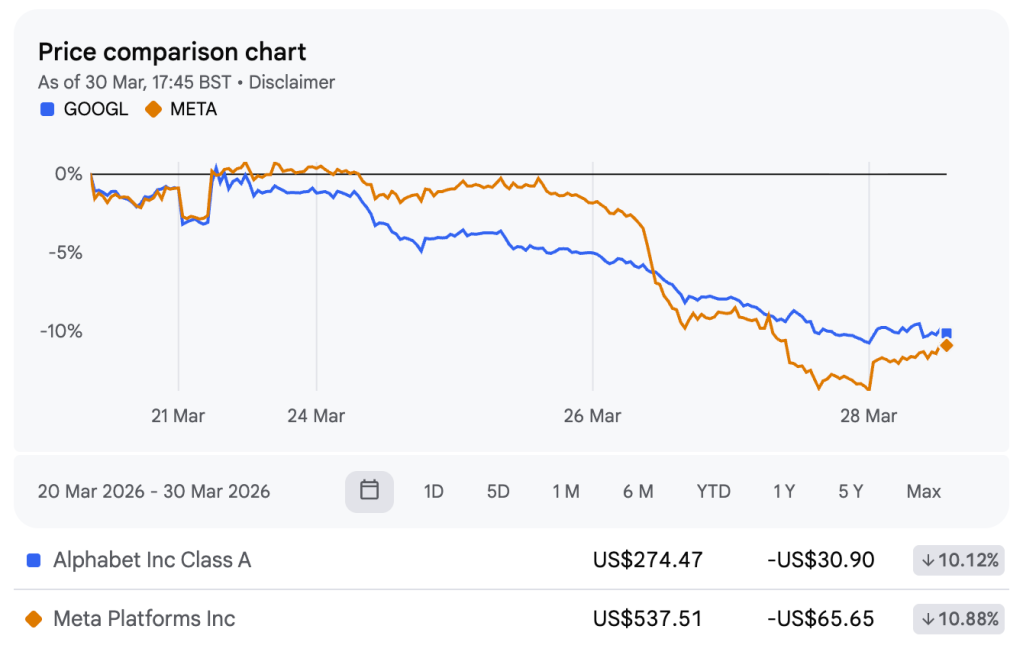

The moment the market lost confidence

Following the L.A. verdict, Meta and YouTube (Alphabet) saw their stocks tank by about 8% and 3%, respectively. It was a huge loss for Meta, which was compared to Big Tobacco in the 1990s. And investors realized Meta’s protective legal shield might be ending.

Until now, tech platforms have always been protected by Section 230, which states that platforms aren’t responsible for third-party content on their apps. But the jury in the California social media addiction trial found that it wasn’t necessarily the content that caused harm, but the app design (features like infinite scroll and push notifications) that drove the addictive behaviors.

Jayne Conroy, partner at Simmons Hanly Conroy, who was part of the plaintiff team in the California trial, said that the likes of Meta and YouTube have “no incentive” to protect minors on their platforms.

Right now, Meta and YouTube can identify minors with a reasonable degree of certainty and show them targeted ads, she said. But if the platforms were to adopt the stricter age verification rules set out in the U.K. and France, for example, “YouTube [alone] could lose about $4 billion a year” because it wouldn’t be able to effectively advertise to minors anymore, Conroy said. “They have no incentive to protect those kids until there’s some sort of State or federal law that requires them to do that.”

Commenting specifically on the California trial, Google spokesperson Jose Castañeda said: “We disagree with the verdict and plan to appeal. This case misunderstands YouTube, which is a responsibly built streaming platform, not a social media site.”
